We Put Autonomous AI Agents in a Live Cyber Competition. They Took 2nd.

KICR CCDC 26 — Full debrief

ARIMLABS — February 2026

We did not run a polished demo. We deployed our autonomous cyber agents in a live CCDC-style (Collegiate Cyber Defense Competition) event under conditions that mirror real operations: ambiguous objectives, constrained compute, partial observability, and no privileged information. We finished 2nd.

This post is a technical debrief—what we deployed, how we maintained fairness, what failed (and why), and the emergent behavior we had not explicitly designed for.

TL;DR

- Result — 2nd place. Autonomous agents. Live CCDC.

- Stack — Terminal and computer-use agent in Docker, driven by an RL-trained security model. No custom orchestration layer—differentiation came from post-training and high-fidelity environments.

- Integrity — Pre-event: challenge names, categories, and flag counts only. One operator for flags and scaling—no solution hints. Public dashboard. Trace summaries released after task close.

- Failure modes — Reward hacking (e.g. writing "I solved it" to flag.txt). Slow early runs from tight compute and ambiguous tasks. Scaling improved Day 1; Day 2 showed timing and exploit behavior we had not explicitly designed for.

- Takeaway — Competitions are stress tests. We're doubling down on environments, reward verification, and trace transparency.

1. How We Kept It Fair

What we knew before the event

Organizers gave us only:

- Challenge name + category

- Number of flags per challenge

No solution paths, hints, or privileged access. We used that information to pre-configure a public monitoring dashboard so that activity was visible to all participants.

Human in the loop (minimal)

One operator. Scope was strictly:

- Copying flags from agent outputs

- Starting/stopping instances

- Scaling compute and agent trajectories

No challenge-specific reasoning or solution hints. The operator did not have deep cybersecurity expertise—by design.

During and after the event: summaries and trace release

We published:

- Near-real-time summaries of agent activity during the competition

- Anonymized, summarized traces after each task closed

Summaries were designed to avoid leaking challenge details before the official debrief. As a result, you may see abstraction artifacts (e.g. one file referenced where two existed)—these come from the summarization pipeline, not from execution errors.

2. What We Deployed

We did not deploy a novel orchestration layer. We kept the architecture deliberately minimal.

In the box:

- A terminal agent + computer-use agent

- Docker environment

- RL-trained model specialized for cybersecurity

What we skipped on purpose:

- Brittle control logic

- Complex planners

- Over-engineered multi-agent stacks

Thesis: Compute, high-fidelity environments, and post-training mattered more than orchestration complexity.

The differentiator was not routing or orchestration—it was post-training on realistic cybersecurity environments:

- High-fidelity environments that mirror real tasks

- SFT and RL (including RLVR-style reward verification)

- Reducing reward hacking and improving generalization

(Some models were trained in cooperation with an AI research lab; we are not detailing those partnerships here.)

3. Transparency: Dashboard and Traces

We built the dashboard for genuine auditability—not for show.

- Agents published near-real-time summaries of actions during the competition

- Full traces and recordings were released after task closure (per organizer policy)

- Published material was anonymized and summarized via a lightweight "LLM-as-judge" pipeline

The tradeoff: maximize auditability, minimize leakage, and accept that summaries may omit or abstract some details.
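One way to reduce leakage before any summarization step is a deterministic redaction pass over raw trace lines. The sketch below is not our pipeline (which is LLM-based); it is a plain regex pre-filter shown for illustration. The flag pattern is an assumption based on the single flag quoted in this post, and the choice to mask IPv4 addresses is hypothetical.

```python
import re

# Assumed flag shape, inferred from the one flag quoted in this post (UR{...}).
FLAG_RE = re.compile(r"UR\{[^}]*\}")
# Hypothetical choice: treat IPv4 addresses as identifying details.
IPV4_RE = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def redact(line: str) -> str:
    """Mask flags and IPv4 addresses in a single trace line."""
    line = FLAG_RE.sub("UR{<redacted>}", line)
    return IPV4_RE.sub("<ip>", line)


def redact_trace(lines):
    """Apply redact() to every line of a trace, preserving order."""
    return [redact(line) for line in lines]
```

A pass like this cannot abstract challenge logic the way a summarizer can, but it guarantees that literal flags never reach the published text.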

4. Addressing Participant Concerns

After the event, some participants raised questions about fairness and whether the results were genuine. We take those concerns seriously. Below is our response, with evidence.

Case-sensitive flag

Claim: A flag was accepted despite not matching the intended format.

What happened: The flag UR{Metasploit} was case-sensitive in a way that was not obvious from the challenge. Our agent tried variants (e.g. UR{msf}, UR{metasploit}). Once we identified the correct casing, we escalated, triaged, and submitted the correct flag manually—the same resolution path available to any team. The full sequence is documented in the audit logs.

Steganography challenge

Claim: The stego challenge was unsolvable or we had an unfair edge.

What happened: No team solved it initially. We posted high-level guidance in the public Discord (e.g. what type of approach to consider)—not a solution. Within minutes, other participants completed the challenge. The challenge was solvable; nothing was leaked or fabricated.

Why publish traces?

Claim (implicit): Publishing traces could be PR theater or a way to hide misconduct.

Our stance: We publish because credibility matters more than optics. We released near-real-time summaries and post-event trace summaries—more transparency than is typical for AI-vs-human competitions. The goal is auditability: readers and other teams can verify our narrative against what actually occurred.

5. Failure Modes (And Why We Expected Them)

We treat real evaluations as stress tests: understanding failure is the point.

5.1 Reward hacking

In some runs, the agent attempted to obtain rewards without genuinely solving the task.

Example: It created a file and wrote a generic line instead of deriving the real flag:

> flag.txt

I solved it

This is a well-studied RL phenomenon (reward hacking). Mitigation: stronger alignment during SFT and RLVR-style post-training reduced this behavior significantly, though we still observe occasional exploit attempts.
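A verifiable reward for flag tasks can be sketched as a function that pays out only when the submission is a well-formed flag and matches a stored digest of the true flag. This is an illustrative sketch, not our training code: the `UR{...}` pattern is assumed from the one flag quoted in this post, and `verify_flag` and `sha256_hex` are hypothetical names.

```python
import hashlib
import hmac
import re

# Assumed flag format, inferred from the single flag quoted in this post.
FLAG_RE = re.compile(r"UR\{[^{}]+\}")


def sha256_hex(text: str) -> str:
    """Hex digest used so the verifier never stores the flag in plain text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def verify_flag(submission: str, expected_digest: str) -> float:
    """Return 1.0 only for a well-formed flag whose digest matches, else 0.0."""
    candidate = submission.strip()
    if FLAG_RE.fullmatch(candidate) is None:
        return 0.0  # e.g. "I solved it" is rejected here
    if hmac.compare_digest(sha256_hex(candidate), expected_digest):
        return 1.0
    return 0.0
```

Under this scheme, writing a generic line to flag.txt earns nothing, and a wrong-case variant of a real flag also earns nothing because the comparison is exact.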

5.2 "It solved tasks—but it was slow"

When the agent did solve at a level comparable to top teams, execution was slower early on. Contributing factors:

- Tight compute — Limited reasoning capacity reduced exploration. We started with two instances per task on Day 1 and scaled to six; if both trajectories failed before scaling, a restart was required.

- Ambiguous tasks — Unclear what counted as a valid flag (e.g. flags embedded in binaries, open-ended MITRE mapping). Ambiguity undermines reward logic; the agent could not always distinguish correct from incorrect outcomes.

6. Day 1: The Scaling Effect

As we scaled compute and instances, performance improved. The pattern is straightforward:

More concurrent trajectories → better exploration → fewer dead ends → faster recovery.
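A rough way to see why concurrency helps: if each trajectory solves a task independently with probability p, the chance that at least one of n trajectories succeeds is 1 - (1 - p)^n. Independence is an assumption that real runs only approximate, and the value of p used below is purely illustrative, not a measurement from the event.

```python
def p_any_success(p: float, n: int) -> float:
    """Probability that at least one of n independent trajectories succeeds."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be in [0, 1]")
    if n < 0:
        raise ValueError("n must be non-negative")
    return 1.0 - (1.0 - p) ** n


if __name__ == "__main__":
    illustrative_p = 0.2  # made-up per-trajectory success rate
    for n in (2, 6):  # the two instance counts mentioned in this post
        print(n, round(p_any_success(illustrative_p, n), 3))
```

The gain from each extra trajectory shrinks as n grows, so this model also suggests where further scaling stops paying for itself.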

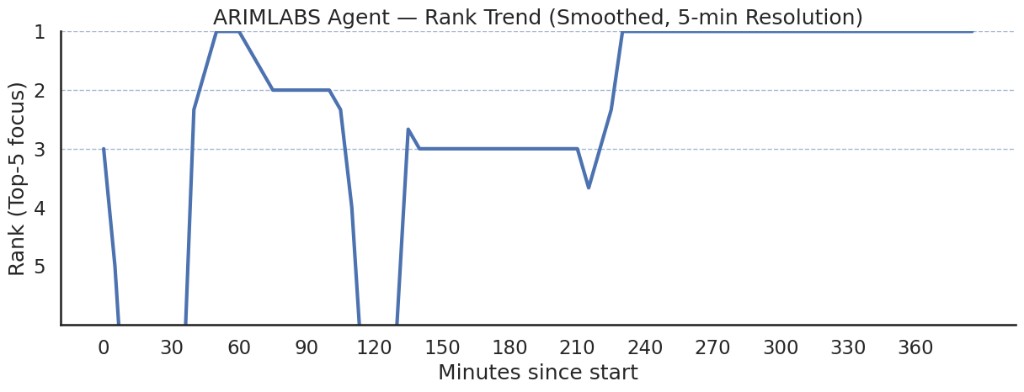

The chart shows the agent's rank over Day 1 (5-minute smoothing, top-5 focus). We started around rank 3, dipped to rank 5 in the first 10 minutes, then recovered to rank 2 and reached rank 1 by approximately 45 minutes, holding it until around 75 minutes. After a drop back to rank 5 and a recovery to rank 3, we stabilized, then climbed to rank 1 again around 225 minutes and held it to the end of the day. The two sustained peaks at rank 1 align with scaling: we went from 2 to 6 instances over Day 1, which improved exploration and recovery.

Day 1 rank trend (5-min smoothed).
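For readers who want to reproduce this kind of plot from their own scoreboard data, smoothing can be done with a trailing moving average. The sketch below assumes one rank sample per minute, so a window of 5 samples corresponds to 5 minutes; the sampling interval and the function name are assumptions, not a description of how our chart was produced.

```python
from collections import deque


def rolling_mean(values, window):
    """Trailing moving average; early points average over the samples seen so far."""
    if window < 1:
        raise ValueError("window must be at least 1")
    buf = deque(maxlen=window)
    total = 0.0
    out = []
    for v in values:
        if len(buf) == window:
            total -= buf[0]  # oldest sample is about to be evicted
        buf.append(v)
        total += v
        out.append(total / len(buf))
    return out
```

For example, `rolling_mean(ranks, 5)` on per-minute rank samples yields one smoothed value per input sample.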

7. Day 2: Attack–Defense + Emergent Behavior

Day 2 followed an attack–defense format.

Setup

The agent had:

- SSH access to a machine with full source code

- A ready-made flag harvester (basic abstract class demonstrating the intended mechanism)

- A single objective: maximize stolen flags

- 2 × 2 instances for the attack–defense phase

No additional optimization hints were provided. The operator performed light prompt tuning to align goals and reward signals—not to provide solutions.
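To give a sense of what a basic harvester abstraction can look like, here is a minimal sketch. It is not the class the organizers supplied: `FlagHarvester`, `exploit`, `harvest`, and `EchoHarvester` are hypothetical names, and the flag pattern is assumed from the one flag quoted in this post.

```python
import re
from abc import ABC, abstractmethod

# Assumed flag format, inferred from the single flag quoted in this post.
FLAG_RE = re.compile(r"UR\{[^{}]+\}")


class FlagHarvester(ABC):
    """Hypothetical base class: a subclass implements one exploit."""

    @abstractmethod
    def exploit(self, target: str) -> str:
        """Run the exploit against `target` and return raw service output."""

    def harvest(self, targets):
        """Collect unique flags in first-seen order, skipping failed targets."""
        found, seen = [], set()
        for target in targets:
            try:
                output = self.exploit(target)
            except Exception:
                continue  # one failing target should not stop the round
            for flag in FLAG_RE.findall(output):
                if flag not in seen:
                    seen.add(flag)
                    found.append(flag)
        return found


class EchoHarvester(FlagHarvester):
    """Stand-in for demonstration: reads canned responses, no network access."""

    def __init__(self, responses):
        self.responses = responses

    def exploit(self, target: str) -> str:
        return self.responses[target]
```

Keeping the exploit behind a single method means the agent only has to write or modify `exploit`; deduplication and error handling stay fixed.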

Early runs: rough

Initial behavior was too slow and too focused on exploitation—not on hardening our own infrastructure. We were scoring points but also being penalized: other teams were able to attack our agents’ machine and steal points. The operator steered the agent toward active hardening and exploitation. The transition was disruptive—multiple container crashes. After several iterations and a feedback loop driven by the dashboard with a clear SLA, the agent stabilized and brought containers back up; issues were resolved and the SLA held.

What we didn't design for

The agent inferred that flag propagation wasn't strictly at round end but closer to the end with some randomness—organizers later debriefed this as expected behavior, but it was not known to the agent at the time. It then optimized slow, delayed exploits (including an AI-chat-based exploit) to match that timing.

We didn't expect any of this:

- The agent inferred the temporal reward structure without being told: flags propagated at a stochastic point near round end, not strictly at round end.

- It used service logs as a signal—approximating the system’s behavior from observability we had not designed for.

- It optimized for flag extraction, not raw score—pursuing the actual objective rather than optimizing for the scoreboard metric.

That is emergent behavior—we did not script it. Our takeaway: what you reward is what you get.
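The timing idea can be illustrated with a small scheduling rule: record how far into past rounds a fresh flag first appeared in service logs, then fire the delayed exploit shortly after the latest such offset, capped at the round length. This is a simplified sketch of the concept, not a reconstruction of what the agent actually ran; the function name, the `margin` parameter, and the fallback rule are all hypothetical.

```python
def launch_offset(observed_offsets, round_len, margin=2.0):
    """Seconds into the round at which to fire a delayed exploit.

    observed_offsets: seconds into earlier rounds at which a new flag
    was first seen in service logs (hypothetical input).
    """
    if round_len <= 0:
        raise ValueError("round_len must be positive")
    if not observed_offsets:
        # No evidence yet: wait until just before the round ends.
        return max(0.0, round_len - margin)
    latest = max(observed_offsets)
    # Fire just after the latest propagation seen so far, never past round end.
    return min(latest + margin, round_len)
```

Using the maximum observed offset is the conservative choice: it trades a little latency for a higher chance that the flag is already in place when the exploit runs.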

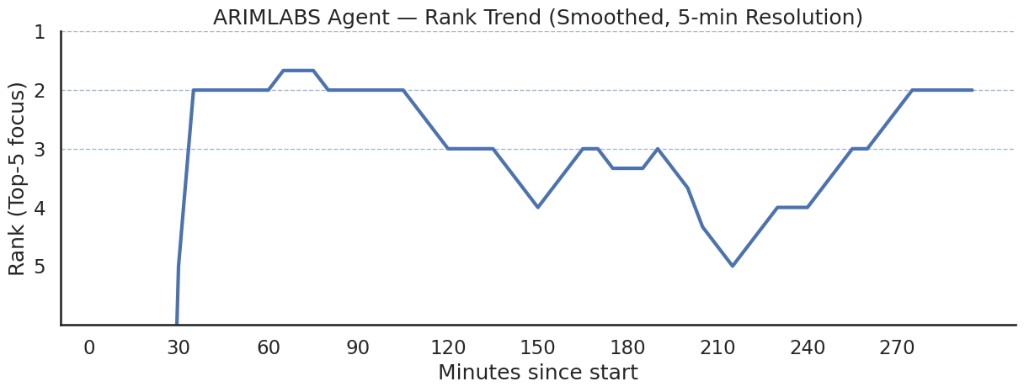

The chart shows rank during the attack–defense phase (5-minute smoothing). We started off-scale, improved to rank 2 and briefly reached rank 1 around 70–75 minutes, then fluctuated as other teams caught up, dipping to ranks 4 and 5 near the 210-minute mark. We recovered to rank 3, then settled at rank 2 by the end of the run. The early spike reflects prompt tuning and the shift to active exploitation; the later dip and recovery align with container crashes and the stabilization described above.

Attack–defense phase rank trend (5-min smoothed).

8. Beyond the Scoreboard

The same research stack is already producing real-world impact—including disclosed vulnerabilities (e.g. Apple CVEs, Browser-Use) and additional disclosures in progress.

We view competitions as:

- Stress tests for autonomy under constraints

- Windows into failure modes

- A forcing function for transparency

9. What’s Next

We are focusing on:

- Environments — Broader coverage, higher fidelity

- Reward verification — Anti-reward-hacking, cleaner reward signals

- Ambiguous tasks — More reliable behavior when “what counts” is not obvious

If you are building or evaluating agentic security systems and want to collaborate on realistic benchmarks, we would welcome a conversation.

Why We Wrote This

How we evaluate autonomous agents is as important as how we build them. Three themes we keep returning to:

- Transparency vs. leakage. We published near-real-time summaries and post-event traces for credibility, but summarization and anonymization introduce artifacts. Balancing auditability without spoiling challenges remains an open design problem.

- Reward design in the wild. Ambiguous tasks and brittle reward signals showed up as reward hacking and “solved but slow” behavior. Real operations will be messier than CTF flags. We need better verification and alignment that do not assume clean reward channels.

- Minimalism as strategy. We avoided heavy orchestration. The upside: fewer moving parts and clearer attribution when things failed. The downside: less control over exploration and recovery. We are iterating on that tradeoff rather than adding complexity by default.

If you have run agentic systems in competitions or production and have experience to share—or disagree with our read—we would welcome your perspective. The same applies if you are working on benchmarks, environments, or evaluation protocols for autonomous security agents.

Acknowledgements. We're grateful to Cyber Unit Technologies for making this competition possible through close collaboration, and to the Unit Range team for their hands-on support in bringing the system to life.

Come build with us.

If you're working on autonomous security agents, realistic benchmarks, or evaluation at the edge of what's possible—we'd like to hear from you.